What is 1000minds decision-making?

A high-level overview of 1000minds decision-making

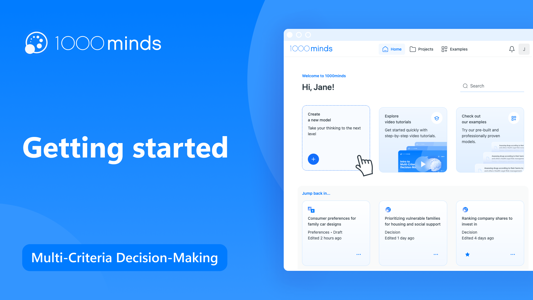

Watch our free, step-by-step video tutorials to help you get started quickly with 1000minds.

This is a brief introduction to conjoint analysis, including some background information about how/when it is used, and an overview about the main components, terminology, and results, including a conjoint analysis example.

Learn how to create a new project, decision, or survey; the difference between a decision and a survey; how to delete and restore items; and how to view our many examples to discover the wide range of applications for 1000minds.

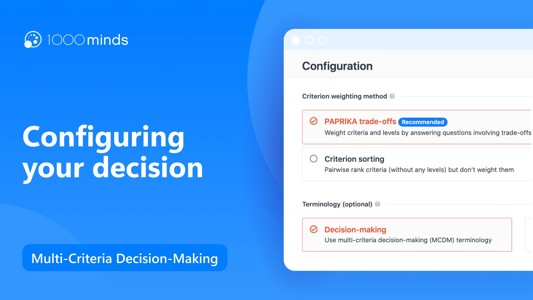

Learn how to configure your 1000minds decision, which criterion-weighting methods 1000minds uses, and how to set your terminology.

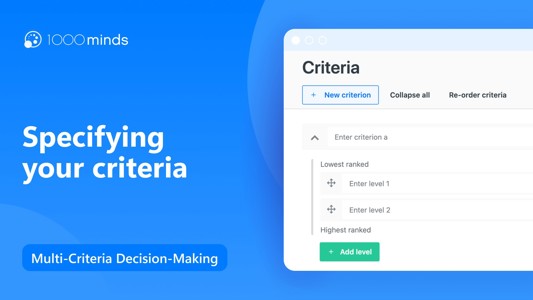

Learn what criteria are, and how to add criteria and levels to your 1000minds decision.

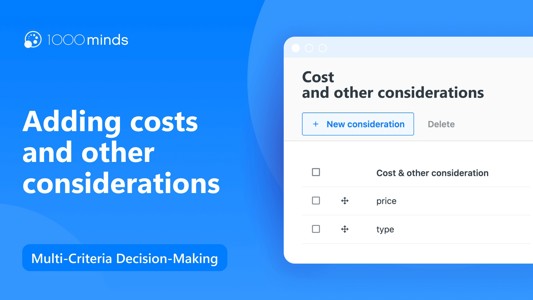

Your costs and other considerations are anything that isn't a benefit when it comes to your alternatives. For example, you might think about monetary costs, number of staff required, categories, or even arbitrary information such as contact informati

Impossible combinations are combinations of levels on the attributes to be excluded at the trade-offs page to avoid confusion. Specifying impossible combinations removes those combinations from the trade-offs and spares you from having to think about

Your alternatives are the different options you are choosing between when making a decision - e.g. houses to buy, projects to allocate staff to, etc. Here, we will look at how to add alternatives and edit the alternatives table.

Learn how to import alternatives from an Excel file into 1000minds. This allows you to conveniently use the 1000minds software with an existing dataset, without having to enter each alternative manually.

By making a series of trade-offs, 1000minds can help you determine the relative importance of each of your criteria. The simplest decision is one where there are only two choices, and that is what 1000minds does by asking you: “Which of these 2 alter

If you want to make a decision together as a group, you can easily gather everyone’s input using the voting feature. This encourages discussion and allows participants to better understand each other’s views.

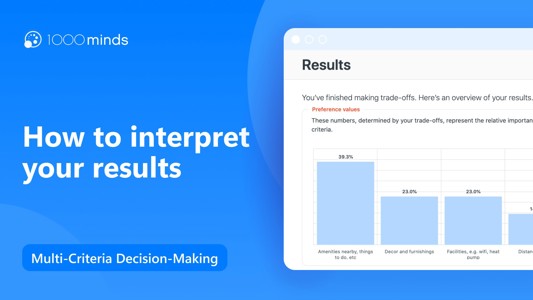

The results section in 1000minds provides you with several different ways to view your results, including examining the preference values of your criteria, comparing your ranked alternatives, and factoring in costs and other considerations on a value for

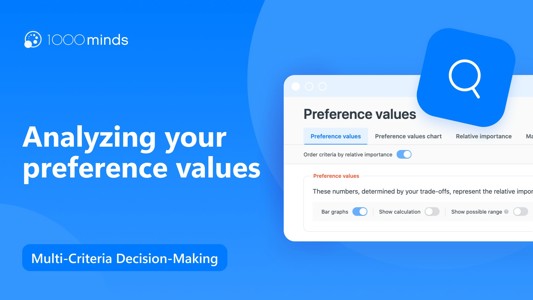

Discover more about your preferences and learn how to better understand the preference values of your criteria and their levels.

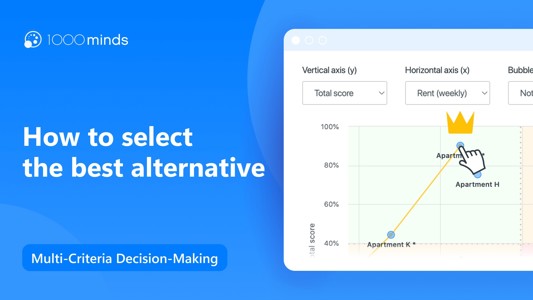

In this 1000minds tutorial, learn how to compare a subset of your ranked alternatives, understand why they scored the way they did, and think about how a slightly different performance or categorization in a criterion would affect your alternative's

The 1000minds value-for-money chart is a simple but effective tool to help you find the best value for your money when deciding between different alternatives. The chart allows you to factor any costs and other considerations into your decision.

Learn how to use the 1000minds value-for-money chart and alternative selection table to factor in costs, other considerations, and a budget to effectively make your best decisions.

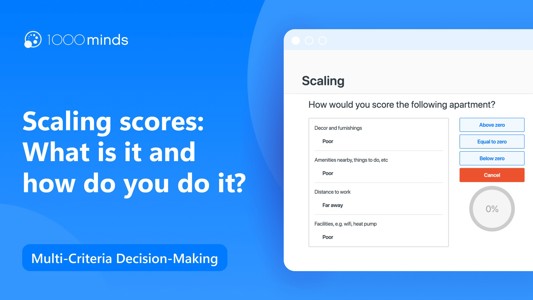

In this 1000minds video tutorial, learn why it matters to scale your scores and how to do it for your next decision. By scaling scores, you can set the minimum possible score of your alternatives to a value other than zero.
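
As a rough illustration of what raising the minimum possible score means, the sketch below linearly rescales scores so that the lowest possible score maps to a chosen value instead of zero. It assumes scores run from 0 to 100 and that the rescaling is linear; the function name `rescale_score`, its parameters, and the sample alternatives are hypothetical and are not taken from 1000minds itself, which may scale scores differently.

```python
def rescale_score(score, new_min, old_min=0.0, old_max=100.0, new_max=100.0):
    """Linearly map a score from [old_min, old_max] onto [new_min, new_max].

    Assumption: scores are on a 0-100 scale and scaling is linear.
    This is an illustrative sketch, not the 1000minds implementation.
    """
    if old_max <= old_min:
        raise ValueError("old_max must be greater than old_min")
    # Position of the score within the original range, from 0.0 to 1.0.
    fraction = (score - old_min) / (old_max - old_min)
    return new_min + fraction * (new_max - new_min)


# Hypothetical alternatives with unscaled scores.
scores = {"Alternative A": 0.0, "Alternative B": 50.0, "Alternative C": 100.0}

# Raise the minimum possible score from 0 to 20.
scaled = {name: rescale_score(s, new_min=20.0) for name, s in scores.items()}

for name, value in scaled.items():
    print(f"{name}: {value:.1f}")
```

Under these assumptions the ranking of the alternatives is unchanged; only the range over which their scores are spread is compressed.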

Learn how to get started with 1000minds conjoint analysis, by setting up and configuring your preferences survey.

Learn how to add attributes to a 1000minds conjoint analysis survey, and customize some features such as making the attributes self-explicated or optional for survey participants.

Learn how to manually add and edit alternatives, and customize them with pictures and descriptions for your conjoint analysis survey.

Learn how to import alternatives into 1000minds from an Excel file, so you can get started quickly using an existing list of alternatives.

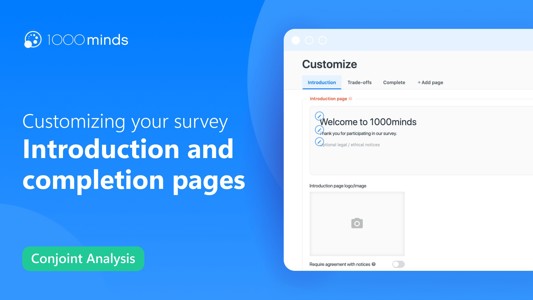

Learn how to customize the introduction and completion pages of your 1000minds survey.

Learn how to customize the trade-offs section of a 1000minds survey, including adding an introduction, title, and subtitle, editing the button text, and adding or removing additional features on the trade-offs page.

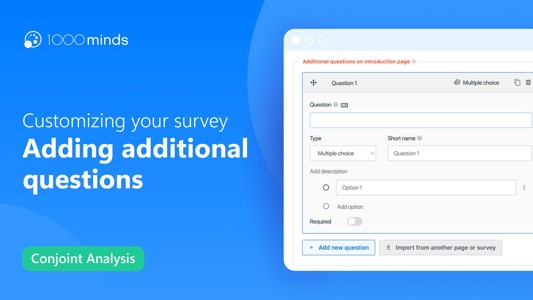

Find out how to add fully customizable questions to your 1000minds survey in addition to the trade-off questions. Whether it is multiple choice, short answer, numeric, or any other question you would like, 1000minds allows you to gather whatever info

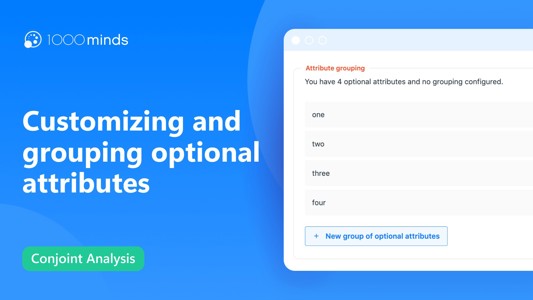

Learn how to allow survey participants to select which attributes they would like to include in their survey questions. This is useful if some of your attributes might not be relevant to all participants.

Learn how to set up your 1000minds survey to receive participants from a survey panel provider.

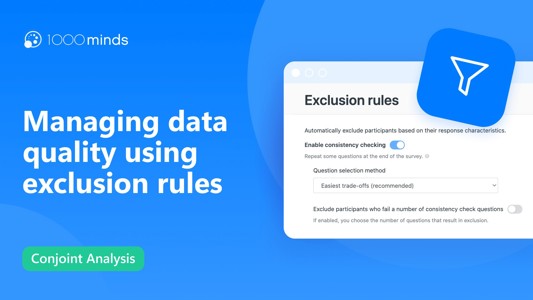

Learn how to improve the quality of your survey data by excluding participants who fail selected quality assurance tests.

Learn how to preview your 1000minds survey to ensure that everything is in place and the survey runs seamlessly.

Find out the best practices for publishing your survey, and learn how to close your survey after a specific time has passed or a number of responses has been received.

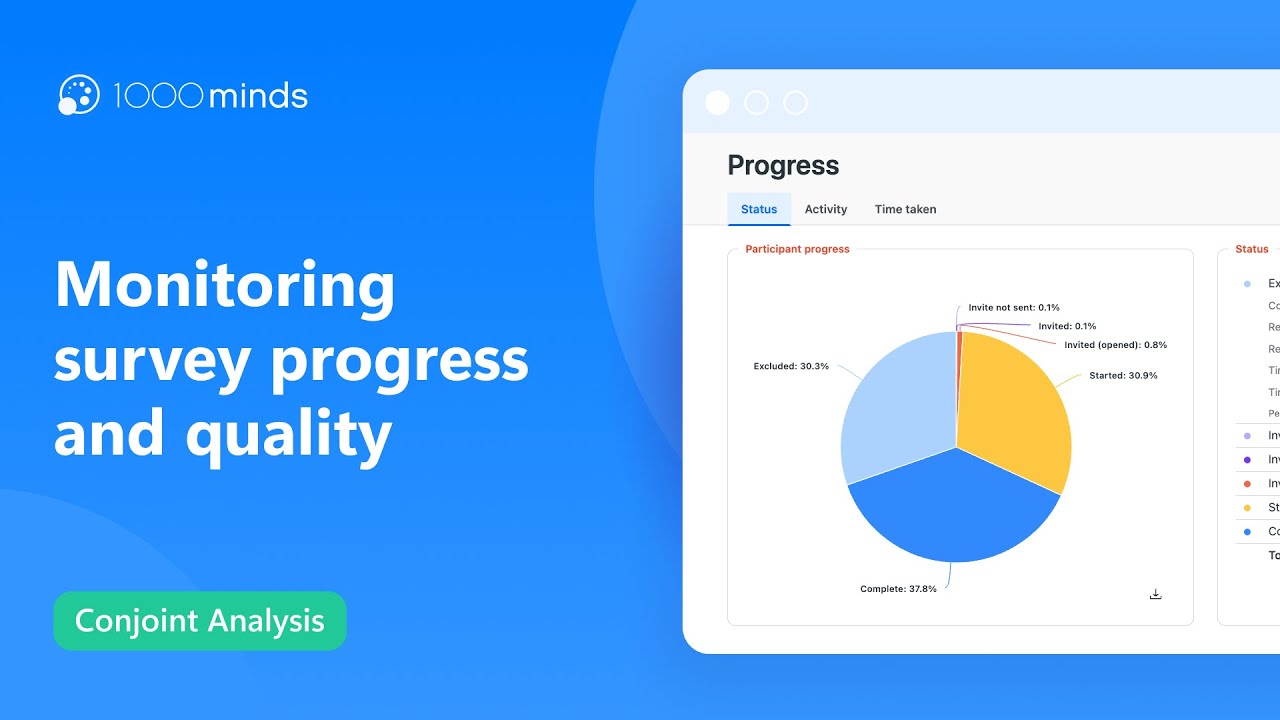

Learn how to monitor participant progress in your survey, how people performed on the exclusion rules, and how long people took to complete your survey.

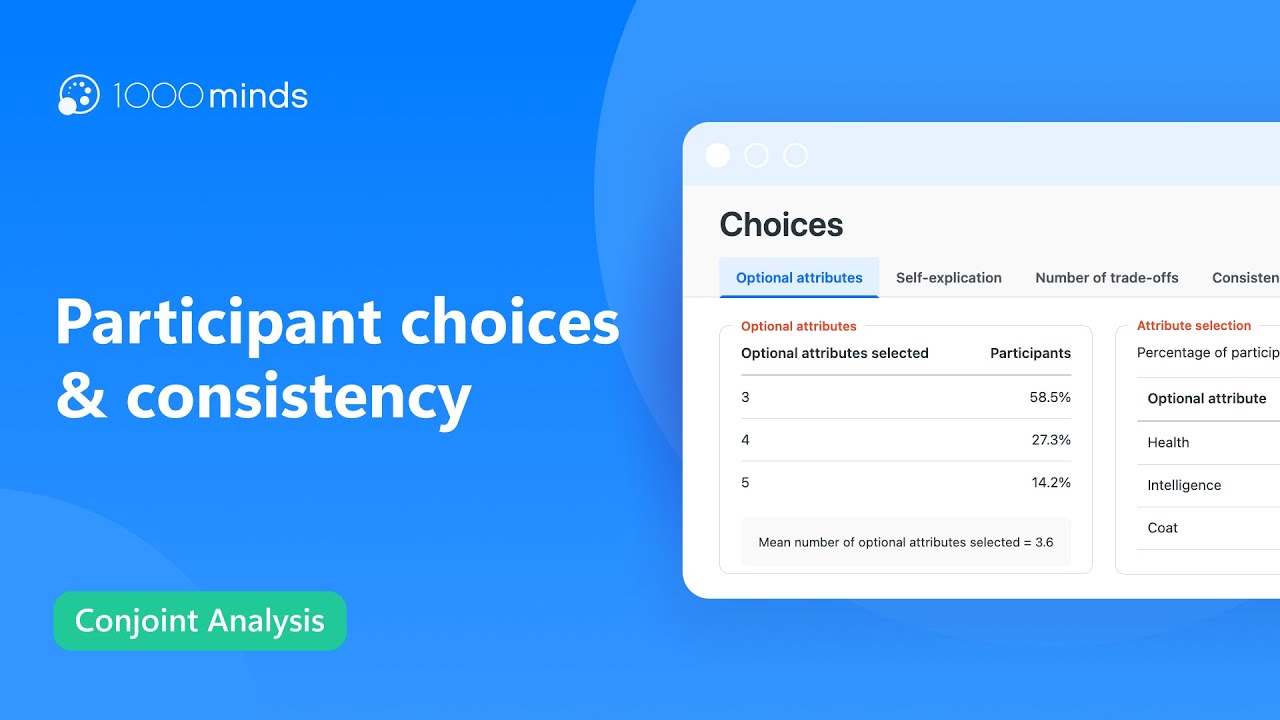

View participant choices regarding optional and self-explicated attributes, as well as the trade-offs made and consistency in participant responses.

Learn how to understand the utilities of your attributes through the various graphs and charts on the 1000minds utilities page.

Learn how to understand participant preferences regarding your alternatives, how to analyze variation among participant preferences, and how to understand the market shares. Based on this information, you can craft the best solution for your particip

Can’t find what you’re looking for? Contact us.